An AI attractiveness test is a digital mirror reflecting data, not desire. It turns the human experience of appeal into a cold score, leaving us more confused than flattered.

We click, we upload, we wait. A number appears. It feels definitive, scientific, final. But what just happened? You haven’t been judged by a person, or even by an intelligence that understands what judgment means. You’ve been processed by a pattern-matching machine. The allure is undeniable—a quick, seemingly objective answer to one of life’s most subjective questions. Yet the result often lands with a thud, a strange artifact that tells us less about our faces and more about our moment: a time when we willingly outsource intimate evaluations to algorithms we don’t understand.

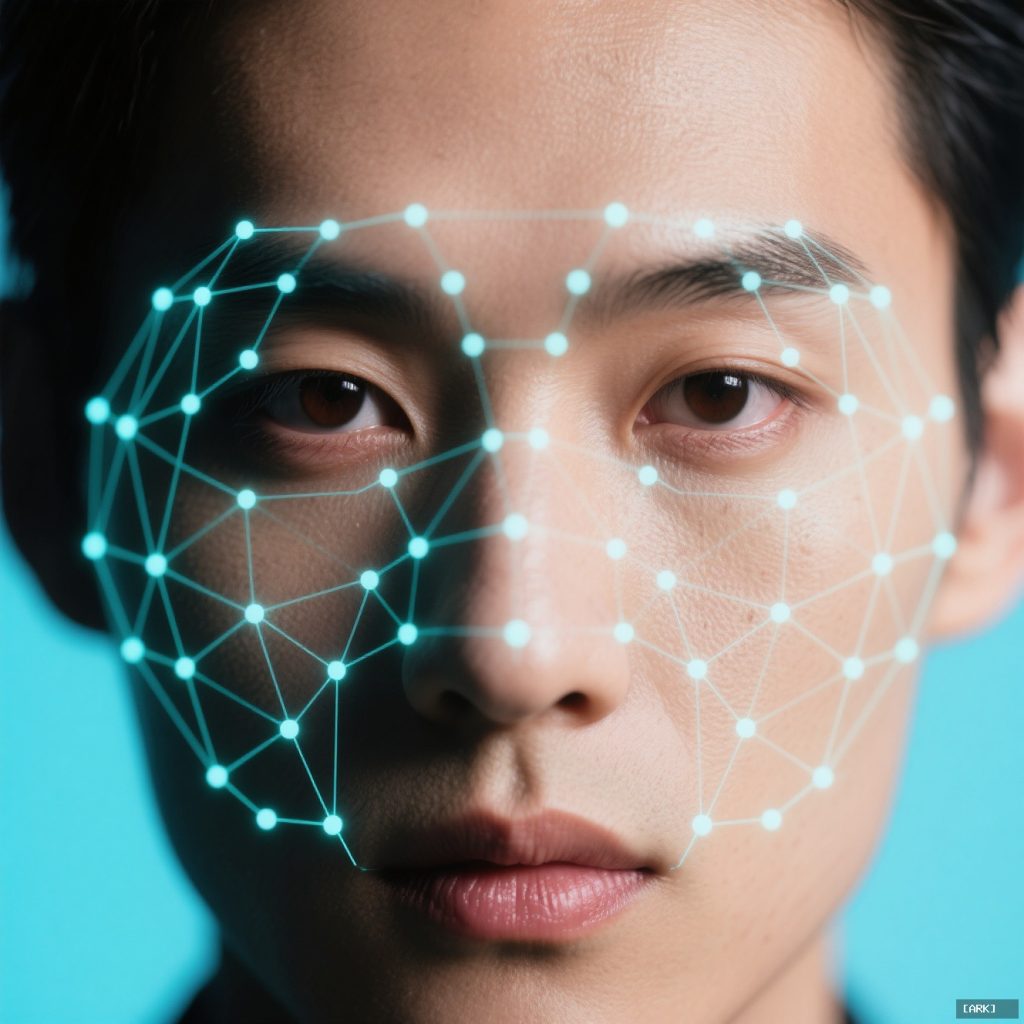

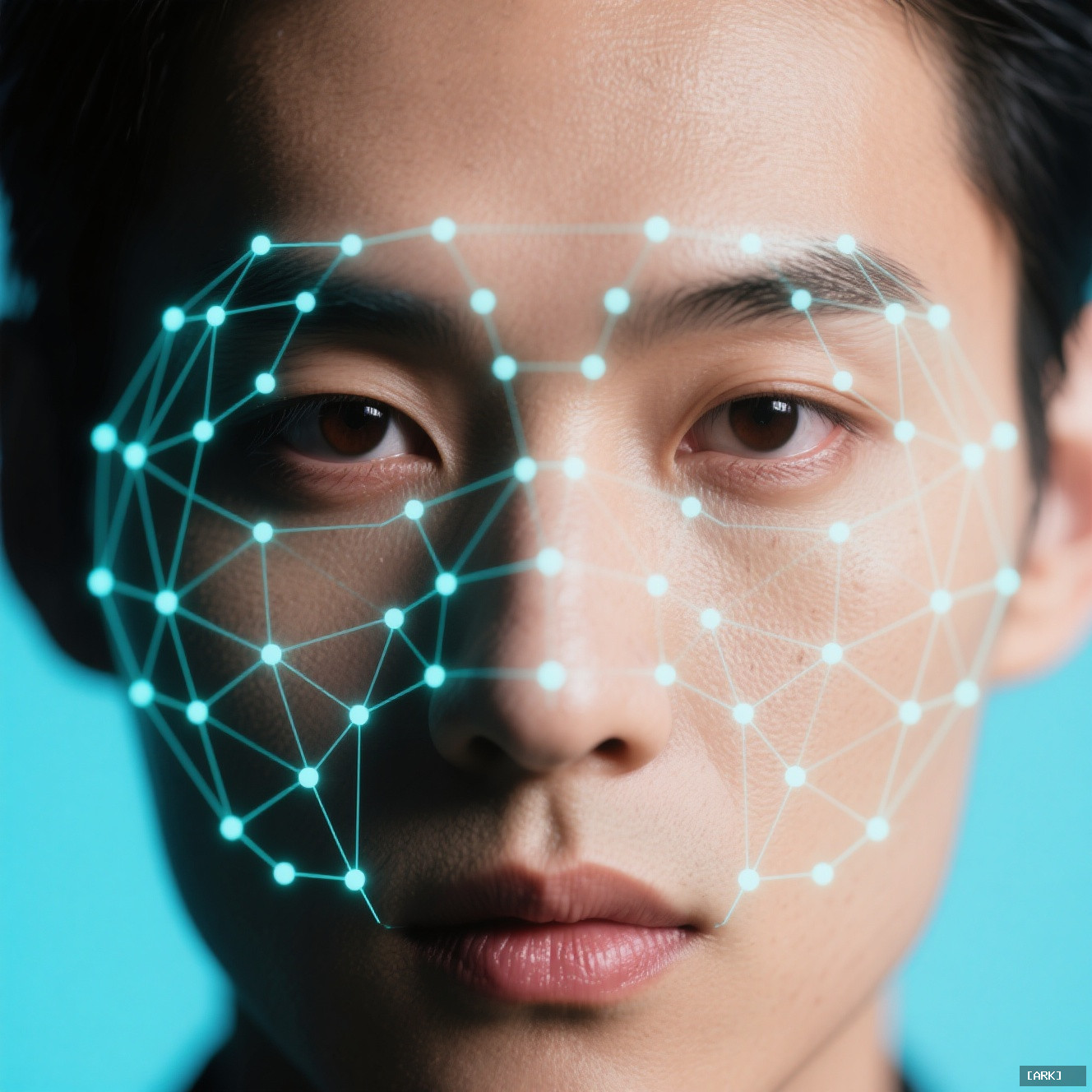

The Mechanics of the Digital Gaze

So, what is an AI beauty assessment actually measuring? Forget romantic notions of beauty. It’s measuring conformity.

The system is a complex statistical model trained on vast datasets of human-labeled images. People—often low-paid contractors—have previously scored these photos. The algorithm’s sole task is to reverse-engineer their decisions. It scans your uploaded photo, isolating your face as a set of mathematical points and textures. It hunts for patterns statistically linked to high scores in its training library: facial symmetry, the distance between your eyes relative to your nose, the smoothness of skin tone, the proportions of your jawline. It’s a geometric and photometric audit.

This is why lighting or photo quality warps your score so easily. Harsh shadows distort landmarks. A pixelated image blurs the data. You’re not being scored on your three-dimensional, moving, expressive self. You’re being scored on a flat digital file, a representation as limited as a passport photo. The algorithm has no concept of your smile’s warmth, the intelligence in your eyes, or the way you carry yourself. It’s like judging a novel by counting the frequency of the letter ‘E’.
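The geometric audit described above, and how easily bad lighting disturbs it, can be sketched in a few lines of Python. This is a minimal sketch built on stated assumptions. The five landmark names, the nose-tip midline, the 1.5 target ratio, and the scoring weights are all hypothetical and are not taken from any real product. The sketch only shows how a score can fall out of coordinate arithmetic, and how a few misplaced pixels can move it.

```python
import math

# Hypothetical landmark format: name -> (x, y) in pixels. Real detectors
# emit dozens of points; five are enough to illustrate the idea.
Landmarks = dict[str, tuple[float, float]]


def symmetry_error(lm: Landmarks) -> float:
    """Mean mismatch between mirrored left/right landmark pairs (0.0 = symmetric).

    Assumes the vertical midline passes through the nose tip.
    """
    mid_x = lm["nose_tip"][0]
    pairs = [("left_eye", "right_eye"), ("left_mouth", "right_mouth")]
    errors = []
    for left, right in pairs:
        # Compare each point's horizontal distance from the midline side to side,
        # and count any vertical offset between the two points as asymmetry too.
        dx = (mid_x - lm[left][0]) - (lm[right][0] - mid_x)
        dy = lm[left][1] - lm[right][1]
        errors.append(math.hypot(dx, dy))
    return sum(errors) / len(errors)


def eye_to_nose_ratio(lm: Landmarks) -> float:
    """Inter-eye distance divided by the eye-midpoint-to-nose-tip length."""
    (lx, ly), (rx, ry) = lm["left_eye"], lm["right_eye"]
    eye_mid = ((lx + rx) / 2, (ly + ry) / 2)
    return math.dist((lx, ly), (rx, ry)) / math.dist(eye_mid, lm["nose_tip"])


def toy_score(lm: Landmarks) -> float:
    """Hypothetical 0-10 score. The weights and the 1.5 target ratio are invented
    stand-ins for whatever a real model absorbed from its raters."""
    raw = 10.0 - 0.5 * symmetry_error(lm) - 4.0 * abs(eye_to_nose_ratio(lm) - 1.5)
    return max(0.0, min(10.0, raw))


face: Landmarks = {
    "left_eye": (40, 50), "right_eye": (80, 50), "nose_tip": (60, 75),
    "left_mouth": (45, 95), "right_mouth": (75, 95),
}
# Same face, but a harsh shadow makes the detector misplace one mouth corner
# by a few pixels.
shadowed = dict(face, left_mouth=(41, 99))

print(f"clean photo:    {toy_score(face):.1f}")
print(f"shadowed photo: {toy_score(shadowed):.1f}")
```

Run as-is, it prints 9.6 for the clean photo and 8.2 for the shadowed one. That is a drop of more than a point, caused entirely by one landmark drifting four pixels. Nothing about the person changed.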

Why the Score Feels So Alien

That feeling of dissonance—the “this is wrong” sensation—is the core of the experience. It’s the clash between quantitative output and qualitative life.

Your self-perception is a rich weave of memories, emotions, relationships, and the feedback you’ve absorbed over a lifetime. An algorithm’s perception is a spreadsheet. It cannot appreciate charisma, wit, kindness, or the magnetic pull of someone’s presence. It misses everything that makes human attraction dynamic and mysterious. The unsettling truth isn’t that the machine is wrong about beauty. It’s that the machine isn’t even operating in the same universe as beauty. It’s performing a narrow technical analysis and slapping a label on it that we, with our human need for meaning, misinterpret as a profound verdict.

The Bias Inherent in the Code

Ask if these systems are biased, and the answer is a resounding yes. They are mirrors, but they reflect a distorted past.

An algorithm has no intent, but it faithfully reproduces, and can amplify, the biases in its training data. If that dataset over-represents young, white, Western faces labeled as attractive, the scoring model will learn that those features are the gold standard. It will penalize faces that deviate from that narrow norm. Landmark audits such as the Gender Shades project showed that commercial facial analysis systems misclassified the gender of darker-skinned women far more often than that of lighter-skinned men. The algorithm doesn’t see diversity; it sees statistical outliers.

This creates a feedback loop of beauty standards. Historical preferences, cultural stereotypes, and the subjective opinions of past labelers get baked into the code. The system then spits them back out, dressed in the authoritative cloak of mathematics. It’s a beauty pageant judged by the aggregated ghosts of past judges, perpetuating their limitations indefinitely.

The Real-World Ripple Effects

Beyond personal curiosity, this technology is seeping into practical domains. Plastic surgeons may use algorithmic appeal evaluation to simulate a nose job or chin augmentation, showing a patient a “mathematically optimized” version of their face. Some dating apps experiment with AI to suggest your “best” profile photo, the one predicted to garner the most swipes based on pattern recognition.

The danger here is ontological slippage. We start mistaking a diagnostic tool for a definitive truth-teller. When a system designed to predict clicks gets repurposed as an oracle of personal value, we cross a line. Its utility is technical—analyzing pixels for predictable patterns. We wrongly assign it existential weight.

And it affects how people see themselves. For a teenager already navigating the minefield of adolescence, a low score from an “objective” AI can be a devastating data point. It promotes a single, quantifiable axis of evaluation in a realm that is gloriously multidimensional. It reduces the complex calculus of human connection to a simple number, like valuing a home solely by its square footage while ignoring the light, the flow between rooms, the feeling of belonging it creates.

How to (Actually) Interpret Your Score

The only healthy way to interpret your score is with profound indifference. Treat it as you would a random number generator.

That number tells a story about the algorithm’s training data, its creators’ choices, and the limits of its perception. It does not tell a story about you. A low score is not a verdict; it’s a mismatch. It’s like scoring poorly on a trivia quiz about a television show you’ve never watched. The result reflects the boundaries of the test, not your intelligence or worth.

If you find yourself wanting to “improve” your score, you’re playing a different game. You can optimize a photo for the machine: perfect frontal lighting, neutral expression, high resolution. But in doing so, you’re not enhancing your attractiveness. You’re learning to pose for a specific machine’s sensor. You’re gaming a system, not engaging with humanity.

A Guide for the Curious Mind

If you’re still tempted to peek into this digital mirror, go in with your eyes open. Here’s a practical checklist.

- Interrogate your motivation. What are you truly hoping to find? Validation? A shortcut to self-knowledge? The algorithm offers neither.

- Remember the source. The score is about the AI’s data history. It’s a reflection of its past, not your present.

- Consider the bias. Who built this? What faces did it likely learn from? Assume the lens is distorted.

- Never let it guide real decisions. Do not change your dating profile, your style, or your self-concept based on this output.

- Delete and move on. Do not let the number take up residence in your mind. It is digital clutter.

Untangling Common Myths

Let’s clear the air on a few frequent questions.

Are the scores accurate?

They can be internally consistent. The same photo will likely get the same score twice. But “accurate” implies a universal truth of beauty exists for the machine to measure. It doesn’t. The score is accurate only to the algorithm’s own, flawed definition.

Which app is the best?

“Best” is meaningless here. Different apps use different training sets—one might be trained on celebrity photos, another on selfies from a particular region. Your score will vary wildly. You’re not finding the truth; you’re sampling different biases.
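Two toy scorers can illustrate both answers above: consistency within one app, and wild disagreement between apps. This is a hypothetical sketch. The app names, feature names, and weights are invented. Real apps use far larger learned models rather than three hand-picked weights.

```python
# Two hypothetical apps that take the same 0-1 facial features but weight them
# differently, standing in for models trained on different datasets.
# Feature names and weights are invented for illustration.
APP_WEIGHTS: dict[str, dict[str, float]] = {
    "app_a": {"symmetry": 0.6, "skin_smoothness": 0.3, "jaw_ratio": 0.1},
    "app_b": {"symmetry": 0.1, "skin_smoothness": 0.2, "jaw_ratio": 0.7},
}


def score(app: str, features: dict[str, float]) -> float:
    """Deterministic weighted sum of 0-1 features, scaled to 0-10."""
    weights = APP_WEIGHTS[app]
    return round(10 * sum(w * features[name] for name, w in weights.items()), 1)


face = {"symmetry": 0.9, "skin_smoothness": 0.8, "jaw_ratio": 0.2}

# Internal consistency: the same app scores the same photo identically every time.
assert score("app_a", face) == score("app_a", face)

# Across apps, the same photo lands far apart.
print(score("app_a", face), score("app_b", face))
```

This prints 8.0 and 3.9. Each app is perfectly repeatable and "accurate" to its own weights. Yet the same face moves about four points just by switching apps.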

Do companies use this to hire people?

Using raw attractiveness scores for hiring is ethically indefensible. Where it disadvantages protected groups, it is also likely to run afoul of anti-discrimination law in many jurisdictions. Some hiring algorithms may analyze video interviews for “traits,” but credible evidence that reputable employers use pure attractiveness scoring is scant. The greater risk is subtler bias embedded in broader facial analysis tools.

The Bigger Picture

The rise of the AI attractiveness test is a symptom of a deeper craving. In an uncertain world, we long for certainty. We want clear answers, even to fuzzy questions. These tools offer the illusion of clarity—a number where there was once a mystery.

But they ask us to outsource our own perception, to distrust the evidence of our lived experience and relationships in favor of a statistical abstraction. The real test isn’t what the AI thinks of your face. The real test is whether you can look at its score, recognize it for the limited and loaded data point it is, and then close the tab. Your appeal was never in the pixels. It’s in the unquantifiable, algorithm-proof space where real human connection happens.

Sources & Further Reading

To understand the technical foundations and ethical stakes of facial assessment technology, these resources provide essential context.

- MIT Media Lab: Gender Shades project on facial analysis bias

- The Algorithmic Justice League: Unmasking AI Harms in Facial Recognition

- Nature: A study on the diversity of facial datasets

- Stanford Human-Centered AI: AI Index Report on societal impact

You may also like

- Ancient Craft Herbal Scented Bead Bracelet with Gold Rutile Quartz, Paired with Sterling Silver (925) Hook Earrings, $198.00 (originally $322.00)

- Ancient Craftsmanship & ICH Herbal Beads Bracelet with Yellow Citrine & Silver Filigree Cloud-Patterned Luck-Boosting Beads, $89.00 (originally $128.00)

- Double-Sided Panda Embroidery Screen – Cantonese Embroidery Bamboo Scene Decorative Gift, $33.68 (originally $46.70)

- Chinese Style Cultural Creative Gift Set – Panda Figurine Decor for Home, Office & International Clients, $17.20 (originally $19.86)

- Tibetan Hand-Painted Thangka Tsatsa Box – Ethnic Style 3D Clay Sculpture Handcrafted Zhajilamu, $32.00 (originally $41.00)

- 2026 New Chinese Style Xiangyunsha Song Brocade Silk Handbag – Gift for Mother & Elders, $115.00 (originally $128.00)

- Shanghai Story 2025 New Silk Scarf Shawl for Women – Mulberry Silk Xiangyunsha with Gift Box, $136.90 (originally $148.90)

- Xiao Niang ‘Cloud Drift’ Loose-Fit Gambiered Gauze Silk Chinese Style Dress XNA1177, $328.00 (originally $360.00)

- Pmsix Tianxu Intangible Cultural Heritage Xiangyunsha Silk Printed 38th Festival Gift New Chinese Style Crossbody Handbag for Women, $94.50 (originally $99.50)